The Managed DSPM Company

Identify critical data and assess data security posture

Classify business-critical content

Protect sensitive and regulated data

Prevent overpermissioning

We protect our customers with an agentless, easy-to-deploy platform that works across both unstructured and structured data without requiring a large security team.

Cyberattacks targeted at big-name businesses may take up most of the headlines, but cybersecurity incidents are also on the rise...

In a business landscape where cloud migration is the norm and the likelihood of data breaches moves from the...

Massive cloud migration and digital transformation are inarguably great for business, but managing all that data is like fighting a...

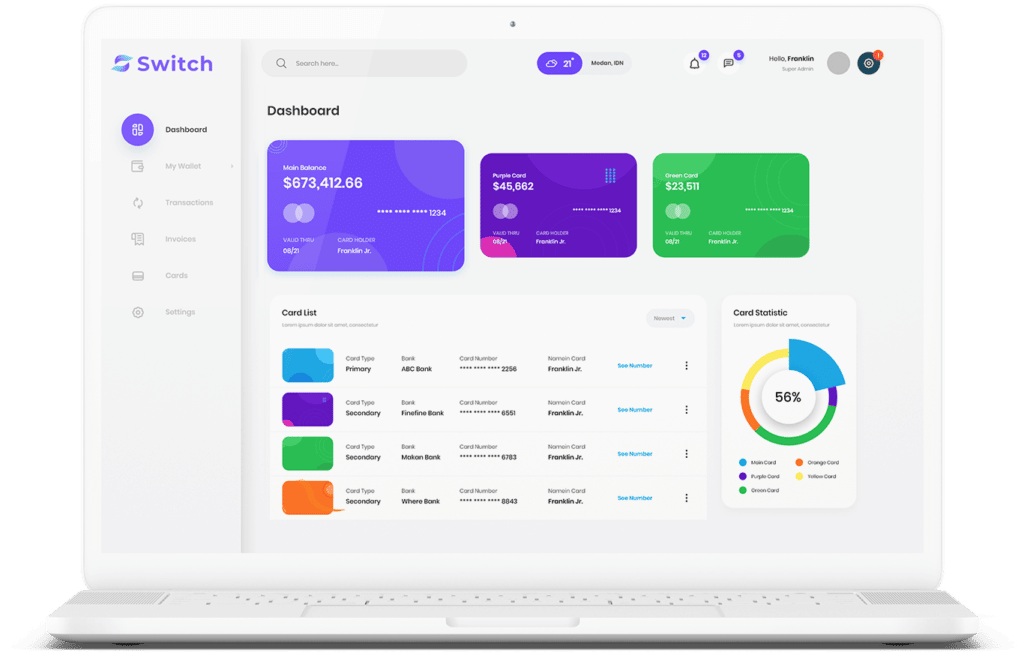

Start connecting your payment with Switch App.